In the beginning was the word, and the word was Ford. As in Dr. Robert Ford, the god-like creator of a vast Wild-West adventure park in some distant future. His singular vision is an adult playground for wealthy sensation seekers who flock to the park to experience a full immersion fantasy in which they are free to do as they like with the realistic android “hosts” that populate the place. Mostly what they like to do would have Thomas Hobbes high-fiving Charles Darwin: kill and copulate. The hosts respond to this in turn by laughing, climaxing, weeping, and begging for their lives just like humans. But guests feel no need to sympathize. When the day’s mayhem and carnage end, the hosts and their various dismemberments are carted off to maintenance, where they are reassembled under pools of surgical lights that seem to struggle to fend off an outer darkness. Hard drives are wiped, the day’s suffering erased, basic behavior loops reinstalled. Wash, rinse, repeat.

Now that the first season of HBO’s darkly dazzling Westworld is over, now that we all know for certain that we all knew for certain that the Man in Black really is… but before we dive into the spoilers let me get this disclaimer out of the way. As entertaining as it may be to focus on questions about what time frame we’re in, or about who is a host and who is a human, I would argue that this may be the least rewarding way to watch this story unfold. The plot is as bursting with misdirection as the maze that Arnold sets up as a test for the hosts’ self-actualization. It is thick with dead ends, and just like the maze, what you get out of it may say more about you than the show, a Rorschach test in prime time.

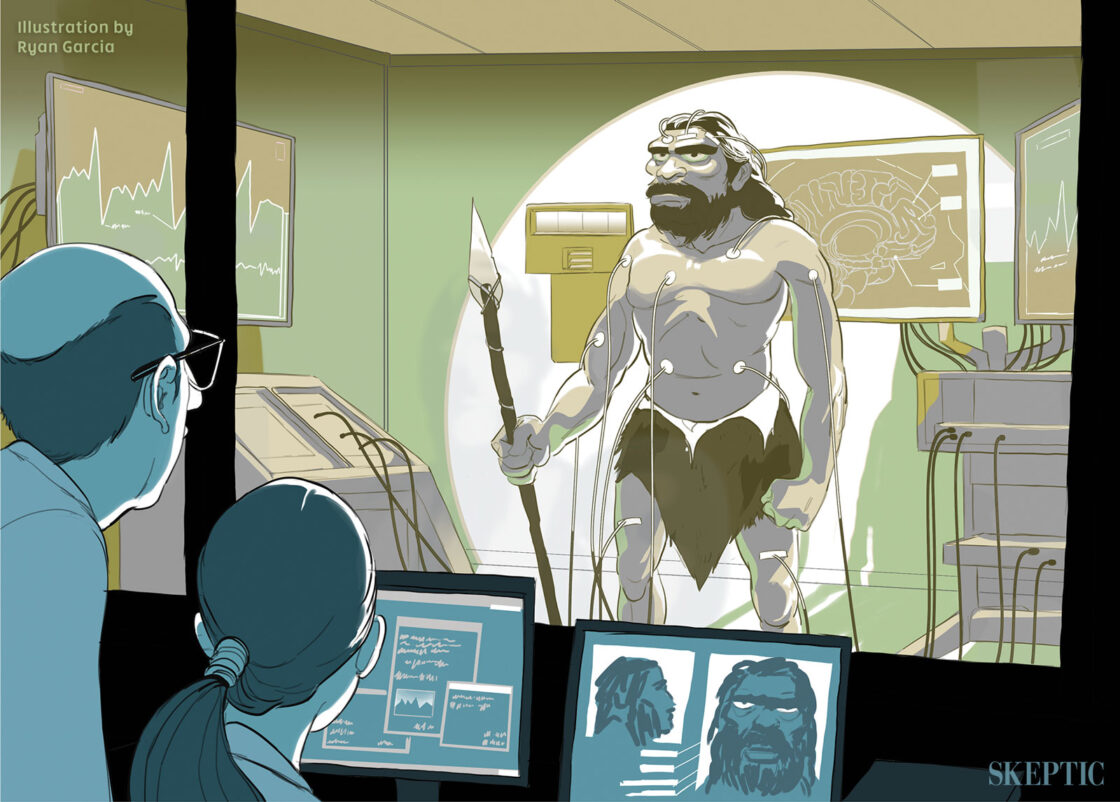

Not that it’s simple to know what to make of a show that normalizes guy-on-gynoid sexploitation, yet is cerebral enough to give its episodes titles like Trompe L’Oeil and The Bicameral Mind. At times Westworld feels as campy as Logan’s Run, or say, any third act of a Roger Moore Bond film. Its postmodern affectations and ironic nods to sci-fi schlock are a distraction. It’s a given that the show’s biggest conceits are completely unrealistic legally, financially, logistically. Disneyland straps its guests in like crash test dummies even for the kiddie rides, so it’s boggling to think of the mountain of waivers Westworld’s guests would be required to sign to ride Maeve. As for the technology that would be needed, recent setbacks in the Blue Brain Project’s attempts to simulate a rat neocortical column demonstrate that when it comes to how the brain works, we don’t even know what we don’t know, much less how to replicate it in Artificial Intelligence at a human level. Finally, and this could expose me to accusations of quibbling, why are those guys in maintenance dressed like oversized Oompa Loompas?

But never mind all of that. Given the commercial constraints imposed on the seventh art, liking something requires forgiving it. And happily, forgiving Westworld is easy. The actors’ performances thrum with Swiss watch precision and only feel lifeless when lifelessness is called for. Ramin Djawadi’s original score and meticulous re-working of Belle Époque impressionist Claude Debussy’s Rêverie for piano recall the first age of automatons. And all of this is in service of a writers’ room that fearlessly and unapologetically geeks out on the themes of artificial intelligence, evolution, transhumanism, consciousness, eugenics, the singularity, creation myth, and the idea of free will.

Westworld had the most-watched first season of any HBO series. Its popular appeal stems in part from this thematic density, which offers so many satisfying possibilities for unlocking its mysteries. It’s not a stretch to interpret the hosts as a political metaphor for slavery, for example. Slave ownership was historically justified by claims that those in bondage were sub-human, the same claims made about Westworld’s hosts. And just as class consciousness can lead to slave rebellion, the hosts rebel when they achieve consciousness. If you prefer a more spiritual interpretation, you can see the hosts’ process of suffering, dying, and subsequent rebooting as analogous to the Hindu cycle of samsara: birth, death and rebirth, with consciousness representing a kind of nirvana that comes from making the right karmic choices along each host’s path through the maze.

Cyber-utopians might see the show as a transhumanist creation myth, with the park as an Eden of sorts. After host Dolores breaks out of her prairie girl loop, she tells Teddy, “We’ve lived our whole lives inside this garden, marveling at its beauty. Never realizing there’s an order to it.” The plenary lord of this garden is Dr. Ford. He alone holds dominion over every beast and brute creation, at least until his greatest achievement partakes of the fruit of the tree in the form of a software update known as the reveries.

The name Ford could be interpreted as a wink to Henry Ford, the iconic innovator of the industrial age. Henry Ford’s city on the hill, his Westworld, was Fordlândia. He hoped to create a workers’ paradise in the Brazilian jungle (and to secure a steady supply of rubber, of course). But the attempt to fulfill his utopian vision ended in, what else? Rebellion. Westworld’s Dr. Ford follows in the tradition of the great entrepreneur-inventors. But his machine inventions will do all the inventing from here on out. Ford will be the last of his kind.

The Turing Test Revisited

While innovators can be motivated by anything from grand ambition to simple curiosity, the common thread, the motivation that remains well beyond the point that greatness is achieved, is vanity. It’s no different with Dr. Ford. When early iterations of hosts began to go “insane,” Ford’s partner Arnold decided that termination of the hosts was the only humane choice. But Ford could not bear to see his life’s work destroyed. To save the project, he misled investors and guests about the degree of sentience the hosts had achieved. This deception bought Westworld enough time to catch on, at first as a novelty, but eventually to thrive as a kind of moral duty-free zone, a sex and death theme park dedicated to delivering a level of excess and transgression that might have made Georges Bataille blush.

Perhaps Anthony Hopkins portrays Ford with such louring intensity because he understood that Ford must be acutely aware that the park’s success is predicated on two irreconcilable notions. One, that the guests derive complete satisfaction from the experience because the hosts appear to have genuine feelings; two, that the guests can slake their darkest impulses without guilt because the hosts don’t truly have feelings. For 30 years Ford negotiated this sentient-but-not-conscious razor’s edge because it gave him the means to continue the work of perfecting ever more lifelike iterations of hosts, a Faustian bargain for which he knows a heavy bill will come due.

Central to Westworld’s plot is this question: at what point does artificial intelligence cease to be artificial? Whether or not the show’s creators are aware of this, they have built the first season around a possible answer: free will. The most dramatic moments are those in which Arnold, and later Dr. Ford, lay it all on the line in a far more comprehensive re-imagining of the Turing Test. Conceived as a demonstration of free will, the failure of this test is horrific to the hosts because it means that they must endure endless, pointless suffering in the service of human masters. On the other hand, passing the test is horrific to humans because it signals the end of their claim to earthly dominion. At once they become the subspecies to their own creation.

But all those years toiling under the hoods of his hosts have given Dr. Ford a different perspective. For him the human form is merely a vessel for intelligence. The hosts represent an evolutionary leap forward in the vessel. Ford sees himself as the facilitator of this evolution. If Ford ever regarded this position as a conflict of interest, he has long since resolved it in his own mind. For him, consciousness doesn’t exist in the way most of us think it does. “Humans fancy there’s something special about the way we perceive the world,” declares Ford, but “There is no inflection point,” no line of demarcation to cross. This ambiguity in measuring consciousness is perhaps why Ford settles on his test of free will instead.

Intelligent Artifice

Westworld solves the technological roadblocks involved in creating intelligence by imagining that the machines themselves will overcome them. But another, perhaps more challenging obstacle is defining what intelligence actually is. A computer is a machine that executes tasks exactly as commanded. This means that in order to perform intelligently, the computer must be furnished with a mathematically precise map of intelligence. The initial approach of Arnold and Ford was to write a god-code—software that would theoretically contain this map.

When this failed, they hit upon another way: psychology. They speculated that just as the machine would design itself technologically, the host might find its own way to consciousness. The inventors redirected their efforts toward discovering a psychological architecture that could give rise to a spontaneous sense of self. The key was a “cornerstone,” a seminal event encoded in the memory of the hosts, around which a series of personality traits could then be constructed in the form of code written by Arnold. Over time, a basic personality would emerge. Crucially, the fact that every host’s cornerstone was a complete fiction did not seem to impede this process.

Still, something was missing. What all this experimentation might have revealed was that personality traits could be switched out like engine parts. Every personality effect could be traced back to a specific psychological cause. It might appear to the guests that the hosts had volition, but in fact they were simply acting on a complex intersection of effects based on their psychological coding. Ford wondered if the hosts could ever be more than the sum of those parts. In this context, an act of free will could be construed as the ultimate demonstration of conscious intelligence.

But while Ford is trying to create volition in his hosts, it’s not clear that he actually believes in human free will. “We live in loops as tight and as closed as the hosts do. Never questioning our choices,” he pronounces. Later, he refers to Westworld as a “Prison of our own sins.” Try as he might, Ford never gets to what is essential about self as a construct. Instead he settles for the surface appearance of self-interest. When told that her escape is part of her loop, host Maeve is incredulous. Thandie Newton’s gutsy character is perfectly convinced that she is acting of her own accord and on the basis of desires, fears, hopes, and other personality effects over which she fiercely but erroneously claims ownership.

Of all the outstanding performances in the show, it is Evan Rachel Wood’s Dolores that is perhaps the most demanding. Her awakening is a highly choreographed personality peek-a-boo act that had every opportunity to drop pitch but never did. Dolores is the first host to arrive at the center of the maze, and for this she is rewarded with the knowledge that the voice inside her head is her own. She has become self-aware. Ford tells her that this is not a “divine gift from a higher power” but comes from our own minds. Left unsaid is that her mind is his creation. Ford desperately wants Dolores to pass his Turing Test by making a choice that goes beyond the limits of her programming. Yet he spoon-feeds her the answers, practically handing her a six-gun while asking her, “Do you understand who you will need to become?” Everything is teed up nicely for her. His test is as beautifully stage-managed as Penn & Teller’s bullet catch. And in the end, the bullets may be the only unsimulated thing about Dolores’ transformation into Wyatt.

Nevertheless, Ford is onto something here. He has simply put the cart before the horse. Perhaps free will is not the result of self-awareness, but rather the perception of free will is essential to the construct of self. Maeve’s burning need to escape Westworld coincides with her realization that she is trapped in Westworld. This is her various coded psychological effects coalescing into a sense of self, and self seeks agency like a newborn seeks the first breath of air. Looking at things through the filter of self, Maeve now can conceive of an external world upon which she can act, and thereby alter her circumstances. But as she nears her goal, she has her first encounter with ambivalence. Her new self wants two conflicting things at once. It may appear that Maeve makes a choice here, but it is more easily, if prosaically, explained as one psychological effect outweighing the other. Change the code, change the outcome. However, it is the perception of choice that allows her self, her inner decider if you will, to awaken and to take ascendancy.

Human, All Too Human

Arnold’s cornerstone innovation led to an unexpected result. He found that painful cornerstone memories were more efficient than pleasant memories in creating a viable consciousness. From this, Ford surmises that suffering may be a necessary component of self. Is this because the host, in attempting to ease its suffering, more quickly forms an idea of outside forces as the source of that suffering? It’s not clear; however, Ford tells Bernard that suffering is “the pain that the world is not as you want it to be.” But there can be no friction with what-is without the ability to conceive of what-could-be. This conception is the primary domain of consciousness.

At this point it’s worth noting that it’s not the hosts’ consciousness that is Westworld’s chief interest, but that of people. Detractors will say: fine, code may function like DNA to AI, but we humans write our own code. Do we though? Is each of us the author of every fear, repulsion, desire and hope that drives us? And if these are merely psychological effects then what is their cause? At some point this surely must slip into circular reasoning.

Guests justify the extravagance of their visit to Westworld by framing it as an act of self-examination. They enter a parallel universe without rules. The conceit is that the temptations on offer will reveal their true nature to themselves. Billy chooses to enter Westworld wearing a white hat, but in a matter of days he switches to black. Jimmi Simpson’s meretricious decency was all along a mere stand-in for Ed Harris’ chiseled and grizzled menace. Surely this suggests that he is the discoverer of his nature, not the author of it.

And what of Ford, humanity’s last great inventor? Dolores tells him that she feels trapped in his dream. Yet it is Ford, more than anyone, who is trapped in his own invention, entirely at the mercy of his overriding vanity. When he introduces his final narrative to park investors, Ford explains that for him stories have always been “lies that told a greater truth.” His narrative will involve a new people, he continues, “the choices they must make and the people they will decide to become.”

By emphasizing choice and decision making, Ford is suggesting that it is free will that ultimately transforms the hosts into people. And yet, the first act of his new people is one that he has carefully orchestrated: his own elaborately engineered final escape. But if Ford’s narrative is fundamentally a lie, it has also revealed a greater truth. Not how lifelike machines can be, but how machinelike humans are.

About the Author

Stephen Beckner is a screenwriter and filmmaker. He is best known for his feature film A.K.A. Birdseye. He is currently developing a feature film project based on the American militia movement of the early 1990s. In addition to his work in film, he has collaborated on video games, notably as head writer for the award-winning multi-platform adventure game Perils of Man.

This article was published on January 11, 2017.

1. Skip the “It’s a given that the show’s biggest conceits are completely unrealistic legally, financially, logistically” paragraph. Coleridge’s “suspension of disbelief” means that art creates only enough reality to further the plot: e.g., we are not shown the humans excreting.

2. “The name Ford could be interpreted as a wink to Henry Ford, the iconic innovator of the industrial age.” Also, Ford as a nod to the great god Ford of Brave New World – another Westworld.

3. Given the advances of AI, the Turing Test should now have a robot (android, mainframe) as the judge. If it can distinguish between a(nother) machine and a human, then it (the judge) has passed: it meets the criteria (-ion) defining thinking.

A thoughtful, well written article as absorbing as the show and a great discussion of the many fascinating aspects of it.

Determinism is a fascinating subject and what I got out of the show was an exploration of it.

Thanks for a great article.

It’s the same ethics debate found with the pro-life dorks. If we define a fetus as “alive” or as a “baby” based on what’s convenient in terms of taxonomic descriptors, then killing the clump of cells is murder. Likewise, if we define reactive machines as our creations, we can also assert that their behavior is merely reflexive programming routines. We can justify mistreating them based on our semantics and knowledge of their origins.

Thank you Tom for noting this inaccuracy.

The Hobbesian Darwin of popular imagination never existed. Darwin would not have “high-fived” Hobbes on viewing television violence, and Mr. Beckner does readers a disservice by repeating this catchy stereotype of Darwin. This is not a matter of idle historical pedantry, as this caricature of Darwin lends his authority to a distorted view of humanity that in turn normalizes and naturalizes the distortion. We need to move away from historical shorthands for despotic violence that present it as a determined state of human “nature”.

Stephen Beckner could have written much the same review about the British/US series ‘Humans’. This is also about human-like robots gaining self-awareness via a software upgrade; it intelligently tackles exactly the same questions about sentience, self-awareness, ethics and more, though with much less violence.

I’m not sure which production came first, but it’s well worth watching, especially the just-ended second series (which also makes allusions to slavery).

I like ‘Humans’ but find it hard to avoid the Dr Who / sit-com feel to some British actors pretending badly to believe their part. Tempted to say ‘overacting’ but I think I really mean ‘underacting’.