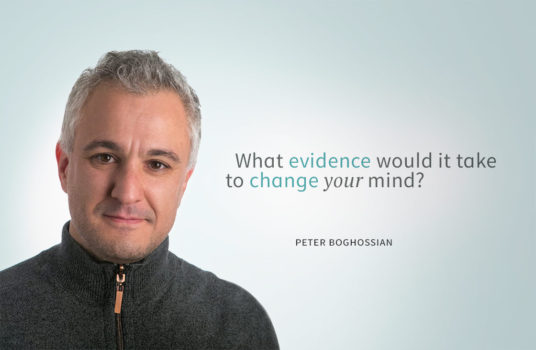

I’ve been writing about and teaching critical thinking for more than two decades. “Form beliefs on the basis of the evidence” was my mantra, and I taught tens of thousands of students how to do just that. Why, then, did people leave my classroom with the same preposterous beliefs as when they entered, from alternative medicine to alien abductions to Obama being a Muslim? Because I had been doing it wrong.

The problem is that everyone thinks they form their beliefs on the basis of evidence. That’s one of the issues, for example, with fake news. Whether it’s Facebook, Twitter, or just surfing Google, people read and share stories either that they want to believe or that comport with what they already believe—then they point to those stories as evidence for their beliefs. Beliefs are used as evidence for beliefs, with fake news just providing fodder.

Teaching people to formulate beliefs on the basis of evidence may, ironically, trap them in false views of reality. Doing so increases their confidence in the truth of a belief because they think they’re believing as good critical thinkers would, but they’re actually digging themselves into a cognitive sinkhole. The more intelligent one is, the deeper the hole. As Michael Shermer famously stated, “Smarter people are better at rationalizing bad ideas.” That is, smarter people are better at making inferences and using data to support their belief, independent of the truth of that belief.

What, then, can we skeptics do? Here’s my recommendation: Instead of telling people to form beliefs on the basis of evidence, encourage them to seek out something, anything, that could potentially undermine their confidence in a particular belief. (Not something that will, but something that could. Phrased this way it’s less threatening.) This makes thinking critical.

Here’s an example of how to accomplish that: Jessica believes Obama is a Muslim. Ask her, on a scale from 1–10, how confident she is in that belief. Once she’s articulated a number, say 9, ask her what evidence she could encounter that would undermine her confidence. For example, what would it take to lower her confidence from 9 to 8, or even 6? Ask her a few questions to help her clarify her thoughts, and then invite her to seek out that evidence.

Philosophers call this process “defeasibility”. Defeasibility basically refers to whether or not a belief is revisable. For example, as Muslims don’t drink alcohol, perhaps a picture of Obama drinking beer would lower her confidence from 9 to 8, or maybe videos over the last eight years of Obama praying at Saint John’s Church in DC would be more effective, lowering her confidence to a 6. Or maybe these wouldn’t budge her confidence. Maybe she’d have well-rehearsed, uncritical responses to these challenges.

This is exactly what happened in my Science and Pseudoscience class at Portland State University. A student insisted Obama was a Muslim. When I displayed a series of pictures of Obama drinking beer on the projector, he instantly and emphatically responded, “Those pictures are photoshopped!” I asked him, on a scale of 1–10, how sure he was. He responded 9.9. I then asked him if he’d like to write an extra-credit paper detailing how the claim that the pictures were photoshopped could be false.

This strategy is effective because asking the question, “What evidence would it take to change your mind?” creates openings or spaces in someone’s belief where they challenge themselves to reflect upon whether or not their confidence in a belief is justified. You’re not telling them anything. You’re simply asking questions. And every time you ask it’s another opportunity for people to reevaluate and revise their beliefs. Every claim can be viewed as such, an opportunity to habituate people to seek disconfirming evidence.

If we don’t place defeasibility front and center, we’re jeopardizing people’s epistemic situation by unwittingly helping them artificially inflate the confidence they place in their beliefs. We’re creating less humility because they’re convincing themselves they’re responsible believers and thus that their beliefs are more likely to be true. That’s the pedagogical solution. It’s the easy part.

The more difficult part is publicly saying, “I don’t know” when we’re asked a question and don’t know the answer. And more difficult still, admitting “I was wrong” when we make a mistake. These are skills worth practicing.

Critical thinking begins with the assumption that our beliefs could be in error, and if they are, that we will revise them accordingly. This is what it means to be humble. Contributing to a culture where humility is the norm begins with us. We can’t expect people to become critical thinkers until we admit our own beliefs or reasoning processes are sometimes wrong, and that there are some questions, particularly in our specialties, that we don’t know how to answer. Doing so should help people become better critical thinkers, far more than 1000 repetitions of “form beliefs on the basis of evidence” ever could.

About the Author

Peter Boghossian is an Assistant Professor of Philosophy at Portland State University and an affiliated faculty member at Oregon Health & Science University in the Division of General Internal Medicine. His popular pieces can be found in Scientific American, Time, the Philosopher’s Magazine, and elsewhere. Follow Peter on Twitter @peterboghossian.

This article was published on April 19, 2017.

One of the best things we can do to help people change their minds is to make it clear they don’t have to say “I was wrong.” There are better, more poised and diplomatic ways to respect your adversary who thinks they might want to change their opinion on something. “I’m re-evaluating that possibility.” “I see the potential in that option.” “Interesting new information.” And my all-time favorite, taught to me by one of the best bosses I ever had: “I just wasn’t entirely right.” Once we’ve convinced someone to change their mind, a little tact can go a long way.

A perfect example of believing something despite evidence to the contrary is climate change (used to be global warming but it stopped warming so they changed the name) and climate change is supposed to cause both droughts and floods, and heat and cold, all due to a slight increase of a trace gas that is essential to life. The Left does not want to control the weather, they want to control YOU.

No, it did not stop warming. “Climate change” became the term of choice because deniers insisted it was a better descriptor than global warming, which they declared alarmist.

Climate scientists are not saying that warming is causing every drought, storm, etc. and they are not even saying that CO2 is certainly the cause of it – they just give it a high probability. But, like most people, leftist non-scientists have difficulty with probability and they tend to “believe” in the greenhouse theory, and that unusual weather must be due to it.

How certain do you think Shermer is about the laws of motion? Ten out of ten I’d say – which makes it odd that since he knows that Building 7 came down into itself at free fall acceleration for 2.25 seconds, and he knows that this MUST mean another energy source other than the building’s own potential gravitational energy MUST have been removing the support, he gives his name and his big laughing face in support of all sorts of stuff in the media that claims just the opposite of what he knows to be true.

One should be extremely sceptical about any of these politically motivated skeptics!

No, building 7 did not come down at freefall.

As children, we are “carefully taught” the things our parents and relatives want us to know. We listen, we learn, we see that there are advantages to fear and greed that we may be able to use to achieve a successful life. Sometimes, we actually understand what our family and others are telling us although we may never be sure that we are not being “conned” in some way. So as we mature, we reach the tender age of 17, and we find ourselves bored, miserable and confused. Our parents, our ministers, our teachers and some guy on television are telling us how to do our s*-#! So by this time we are already unable to do any critical thinking about the world and all the belief propaganda that has been rained down upon us. So I must ask the question: “How in the world is it even remotely possible to sort things out and try to see the ‘truth’ of any statement another person presents to us and says he knows what is good for us?” Here again we see the absurdity of our existence with the billions and billions of other people’s ideas forced at us through the powers that be!

Our lives are a steady stream of random thoughts that run through our minds every day. We are manipulated by forces that just want to use and abuse us for their very own greed. And today we are now subjected to infinite numbers of digital and analog ads and images whenever we want to expose our minds.

Critical thinking is really just a way to try and solve our daily problems using reasoning that has been corrupted by uncritical “vampires” and “devils” whose only motive is Profit and Destruction of values.

So, try as we might, we will all be pretty unsuccessful in our daily attempts to reach any kind of a rational result, whether we are seventeen or seventy. Other people will not let us change their minds or their personal life’s model built by previous propaganda.

The easy way out, since we don’t have free will, is to use what seems most likely when confronted with more than one fact on any given subject. One can operate on what seems most likely without having to believe a thing.

Most people know about the way in which people in earlier times believed in the Earth-centered universe and the fact that it was based on Biblical writing. The more that it failed to conform to observed data, the more complicated it became. Many people suffered and died before it was finally conceded that the Sun was the center of our solar system and things became far more simple.

As a child in Sunday School I was told many incredible stories which I accepted as fairy tales, the same as the bedtime stories that I was read. To believe the literal truth of those stories tests the belief system of a child. I accept them as allegories intended to express deeper truths.

We also have cultural and linguistic baggage that we may have to examine before we can call ourselves genuine thinkers. Anybody who has ever studied a foreign language knows that there are many different ways of expressing an idea. Indoctrination by parents, schools and the media can lead a person down a false road from which we may never truly escape. It is well to remember that even the most basic of our beliefs may be wrong.

I am not as critical of Peter Boghossian’s article as some have been here, but with almost all of the comments so far I feel a lurking sadness about this subject. Everything we encounter in this brief life is extraordinary in the sense that nothing we touch, see, or believe to be here, real, or true makes sense VS the evidence. Physics and the “uncertainty principle” is the big one for me. Even the current growing popularity of the idea that we are simulations needs an origin same as does the Big Bang result.

As I grew older I began to find that trying to convert religious believers is fun, not an obligation. A little sad, however, when religious dogma has such a large role in how the laws of our planet are formulated by legislators who, in democracies, are salesmen and not thinkers or leaders. Myself, I did not choose my parents while existing as a soul floating around observing young couples having (and possibly even enjoying) unprotected sex. I can be happy who I am and where I was born but not proud. (Ask a Somalian). I am not guilty re how much time I do not spend on enlightening the hopelessly blind majority, but as old as I am now I still find dueling with believers an amusement, but always with the lingering aforementioned sad aspect to it all.

At some stage of my life I worked as a policy adviser for the Federal Government of Australia. If there is a job opening in the Australian public service, they formulate a set of selection criteria – usually about ten – and every applicant has to write a statement on how he meets each of the individual criteria. It’s a decent attempt at a fair and transparent selection process.

Anyhow, there is a criterion that is used only for the senior executive service, ie the top people running a department. It’s the “ability to cope with ambiguity and uncertainty”.

Apparently the Australians consider that to be an important skill to have for a top level position in their public service, but they don’t expect everybody to have it!

As a teacher I NEVER challenged people’s beliefs. That only makes them defensive and less likely to take in new information.

I just tell them that they need to know the theories and facts, and they must not dispute these theories and facts in exams. They MAY save their souls by qualifying their answer by saying ‘scientists believe’ or ‘current scientific theory’.

It is a bit like sneaking knowledge and understanding in the back door.

To Kim and those attempting to help her: to help mitigate your heartburn, get yourself a copy of Sarah Knight’s “The Life-Changing Magic of Not Giving a F*ck.”

This article suggests the reason why I have been banging my head against a brick wall. I am looking at a possible “outside the box” model of the evolution of human intelligence, based on an early “human friendly” computer model that failed to gain support because it was incompatible with the 1970’s AI experts. I am busy hitting problems in convincing the “experts” because most seem to hold at least 2 of the following deeply held beliefs:

(1) The stored program model of computing must be the answer because (a) look how COMMERCIALLY successful it is and (b) Turing’s approach described a UNIVERSAL machine – so it must be the only possible model – forgetting that computers were initially designed to do mathematical tasks that humans were bad at, and no-one seems to have ever set out to go back to a first-principles approach. REJECT IDEA as of course the foundations of computing must be sound because computers make so much money, etc., etc.

(2) Human brains are obviously significantly better at processing information than animals. OK they are bigger and may be super-charged – but it must have evolved, and my model shows that at the neuron level we share exactly the same genetically-controlled decision making which is so crude that we do the best to hide it with generations of meme learning embedded in language. REJECT IDEA – as our brains must be innately mathematically logical at a sophisticated level.

(3) Neural nets take so long to learn in depth that our intelligence may be due to something special in how the brain works (a kind of sophisticated “Philosopher’s Net” for those who do not say “God did it”) – but the model I am working on shows how neural net knowledge could be quickly transferred from parents to child – resulting in a combined meme neural net perhaps 4,000 levels deep since language started to support “speed learning”. REJECT IDEA as modern AI neural net research works on trying to model from the bottom up using very powerful systems and raw big data – so a simple neural net model is ridiculous.

@Gregory Dearth, I completely agree. At the same time, I tap dance.

I taught the triangle of fire this weekend to a bunch of kids. The teaching method involved saying “Over there are piles of wood. There is little wood. There is littler wood and there is really really little wood. We also have big wood. Please do not use the big wood in its current state. If you want to use big wood, we have an axe yard to make littler wood from the big wood. Here is a package with other things that might be useful to start a fire.”

They started making fire.

The game was rigged that day though. The wood we had was of the type that made not making fire sort of hard.

There is a feeling involved in making fire. There is science. When I build a fire though, I don’t usually have micrometers to measure the distance between and the size of my kindling and tinder. I have a pretty good idea based on past experience what will work and what won’t work.

There is an emotional aspect to that knowledge. That emotion came from experience. The experience is not perfect though.

Teaching these scouts has taught me a few things.

1. If you can’t start a fire with 2 matches, starting it with 200 will have a very good chance of not succeeding also.

2. A badly set up fire still starts if you have miracle wood on hand — and dry kindling.

3. Even with miracle wood, it is possible to not get a fire to start.

The first step is success in starting a fire.

The first step can also be failure at starting a fire.

The triangle of fire is actually a tetrahedron.

The first three sides are easy to explain. They are not always easy to internalize. It is possible to be an expert at starting fires and still miss out on obvious aspects of the science involved. It is definitely possible to be an expert at the science and be an absolute moron when it comes to starting fires.

Science is wonderful. Practical application of science is magnificent. Standing by science as an authority — COMPLETELY MISSING THE POINT.

BTW I am not suggesting that Gregory Dearth is missing the point. I am suggesting that those that throw “consensus” around without understanding the origins of the “consensus” are missing the point. If you need consensus, the science is absolutely not settled.

I was in a pickle awhile back. I had a neighbor attempting to slice my wife and kids away from me. He had worked things pretty smoothly. Unraveling it was complicated. One day I was walking around the lake near my house, partly to control the nervous energy I had as a result of the challenge my neighbor presented. As I was passing a driveway, a tiny white dog came running down the driveway barking at me.

His owner heard the yaps and their approximate location (on a well travelled road) and came out to collect the dog. The dog belonged to someone I knew. We exchanged pleasantries… jobs, weather, family, and I headed back on my walk. He was the brother of my neighbor that was causing me fits.

A week and a half later, the fan was suddenly full of excrement put there by my neighbor. I picked up the phone and called the brother and said “Your brother is doing some really weird things that I do not quite understand, can you help me understand?”

He listened. He said “I am very sorry, please hang up and go get a restraining order on my brother. When he moved in next to you, he was coming from Walla Walla. He was in Walla Walla because he beat up a woman and kidnapped her. What you describe is exactly what happened to that lady.”

I learned a lot about restraining orders that week.

Had that dog not run down the driveway and sort of forced me to talk to the brother, I might not have had the inclination to call him. I bought that dog some treats.

I do not believe the supernatural was involved. It was likely all random. It is possible that I took the opportunity of that dog coming out of a house I knew belonged to the brother of my neighbor to connect gently.

I cannot completely throw away the possibility that some higher order touched the dog at just the right time to cause him to come yapping at me. I cannot completely eliminate it. To completely eliminate it without a lot of evidence to eliminate is just as wrong as assuming that was what happened.

“What, then, can we skeptics do? Here’s my recommendation: Instead of telling people to form beliefs on the basis of evidence, encourage them to seek out something, anything, that could potentially undermine their confidence in a particular belief. (Not something that will, but something that could. Phrased this way it’s less threatening.) This makes thinking critical.”

Wrong. This programs people to shift the burden of proof. Evidence isn’t just anecdotal feelings and visualizations nobody else can verify. Evidence for existential claims is objectively demonstrable. Teaching people how to tell if they have SUFFICIENT evidence for a claim is key, as is the whole point of objective verification via demonstration.

Teaching people to look for how to falsify their beliefs only works for falsifiable beliefs. It is also misunderstood by the incredulous to mean someone else needs to prove them FALSE or they are justified to believe.

This nonsense article dodges the actual problem of irrational belief (the absence of evidence proportional to that belief). If people better understood that extraordinary claims require extraordinary evidence (not mere feelings), fewer people would believe insufficiently supported or unsupported claims. If more people understood that existential claims require demonstrable evidence, not mere testimony, fewer would believe claims based on popularity.

If more people understood three basic logical fallacies, hardly anyone would believe any apologist (Bigfoot promoters, creationists, etc).

1. argument from ignorance/personal incredulity

2. argument from popularity/age

3. argument from analogy or equivocation

If people understood WHY these are logical fallacies, they wouldn’t suffer them when trying to merely justify their beliefs, and would be less likely to believe in the incredulous nonsense in the first place.

That is what makes a difference. Shifting the burden of proof (the error an incredulous believer would make if you asked them to falsify their belief), is just another logical fallacy they already commit. They don’t need your encouragement to look for ways to prove themselves WRONG if they lack a sufficient foundation of evidence to justify belief in the first place.

For example, a magical invisible fairy is who created the universe with a big bang event we know happened. HOW could you falsify that belief? Null hypothesis, anyone?

NO. Teach people to be apistevist rational skeptics and the rest will take care of itself.

I would be willing to abandon my dis-belief in the supernatural, were there any positive evidence that it exists — evidence of the type that could not appear except by exclusively supernatural cause. For example, the prayerful supernatural might be claimed to have been in evidence by understandably grateful individuals who escape the destruction of a burning building — but natural cause can invariably also explain such narrow escapes. Were, to further the example, the fires to have freeze-framed and their heat suddenly vanish in defiance of natural physics, like a film special effect, allowing trapped victims to exit around the frozen flames before the conflagration resumed once everyone was safe, I may have to revisit my position on the intervention of supernatural forces. Such an occurrence sounds extreme and absurd to even suggest — but rightfully so, as it is the essence of an irrational belief in the supernatural that is never fully confronted by those who hold it.

Mr. BOGHOSSIAN (caps are because I cheated and copied and pasted and am too lazy to change that, but am not too lazy to type this extended reason).

I completely agree with your line of attack. I have a warning for you and everyone else attempting it.

https://youtu.be/UBVV8pch1dM

The two parts of the brain (as depicted in the video) represent fast and slow thinking. Fast guy gets things done almost instantaneously based on what is happening around him. Slow guy deliberates meaningfully through the problem. Fast guy does things well most of the time. He gets things done. NOW! Slow guy doesn’t get things done fast.

Skeptics face a challenge. This challenge is bidirectional. Anyone thinking this challenge does not affect them is deluding themselves. When we are attempting to teach critical thinking, what we are doing is attempting to kick the slow thinker off the couch and get him thinking.

THIS IS A VERY DANGEROUS activity. Fast thinker is not just a guy who gets things done. He is a guy who guards the time of the slow thinker. He is vicious about it. Attempt to get that guy on the couch moving and you risk ending up on the ground with a broken tooth.

I have experienced this response from the most sensible people in the world.

ANY attempt to get past the guard is likely to be met with vicious comments. There are some folks, very few and very far between, who will stop and think. The more the folks believe they have perfectly thought through something critically, the greater the likelihood of triggering a vicious response.

It happens to me every day from the other direction. People try to get my slow thinker off the couch. Part of my brain rises up to respond. I don’t always keep it contained properly.

The more we think about things critically the more certain we become. Overcertainty is one of the great problems facing our scientific establishment today. I have to remind myself of how many smokers are walking around not dead every time I start becoming certain of something.

I am a big fan of numberwatch.co.uk and wmbriggs.com. Two gentlemen who see the other side of the certainty chain and are waving their arms trying to get those in power to stop being idiots.

For me the really hard part is recognizing that all those folks who scream back at me that I am a denier are absolutely correct to do so. We do not have time to play “Dancing Wu Li Master” and start over again every second. What I learned from Dancing Wu Li Master though was that we have to start over again all the time. There is no way to master what is happening without always starting from the beginning. I have to hold on gently to the things I am confident of. I can’t hold so tightly that I do not move.

Following your Twitter feed…..

What should the punishment be for being a police officer?

I play golf with a couple of guys who are in frequent email contact with one another on this topic – one an atheist who enjoys pointing out all the many wrongs that priests have done, the other a committed Christian who would love to convert the other guy to his faith.

Why do these two believers (for atheism is a form of belief, too) feel the need to convert each other? Why do adherents to any religion, or none, want everyone else to accept their received ideas? My simplistic answer is, because they all know that they don’t have the final answer they are seeking but, if everyone else holds their belief, it will make it far more robust.

Our consciousness gives us the double-edged sword of intelligently understanding our own mortality but not comprehending how it can be true that we will shortly cease to exist (in human form, some would say). We hold this conceit despite our certain knowledge that we did not exist in any form 100 years ago but find it hard to handle the idea that we will not exist in 100 years’ time.

So, if we have decided on our own way of getting through this life without despairing at its eventual end, can we not let others decide on their own different way to accomplish the same objective without questioning the route they are taking to get there?

You don’t get those conversations playing tennis!

“Why do these two believers (for atheism is a form of belief, too) feel the need to convert each other? Why do adherents to any religion, or none, want everyone else to accept their received ideas?”

I think it is just human nature!

Life is about problem solving. We may come up with an idea for a solution to a problem. Our brain gives a little squirt of endorphins when somebody agrees that our idea is a good one, i.e. likely to solve the problem.

Religious questions are just another set of problems.

Actually, Atheism is not a belief (no matter how many people may call themselves Atheists). Atheism is the name of a concept referring to non-belief; a belief that is not, nor actually has a true definition – it is simply an empty set.

There is no argument for or against Atheism. But, making silly, ignorant, unsubstantiable arguments pertaining to presumed factualities in relation to religious or political beliefs, well that is, sadly, a rather large, swampy field.

Atheism may or may not be a form of belief – it is only if you “believe”, that is take it for a certainty, that there are no gods. A more scientific attitude is agnosticism, which is admission of lack of certainty. Of course when there are thousands of religions which all claim to be the only true one something can be said about the probability of any particular one.

Most people do not come to atheism or agnosticism through forced indoctrination, although falling in with a different social set or culture can be important, as it is for change of religion.

Kim, First, do the research on how and why the King James version of the Bible came into existence. The historical information and documentation are easily available. Armed with this material, difficult questions can then be asked pertaining to Jeff’s belief that the Bible was literally written by God. That said, Jeff may be scared out of his pants, for reasons stemming from his childhood, to challenge his religious beliefs. If so, you have a classic case of blind belief and no amount of reasoning or evidence will make it safe enough for him to change. Roger

This is a great idea, and I agree. I have been arguing on Facebook for years with this Christian man, Jeff. He already does this very thing, seeking out the evidence that could disprove his beliefs. He has learned about (he is not a scientist) the scientific evidence that demonstrates that the Bible is incorrect in its assertions (creationism vs evolution, Noah’s ark is impossible and never happened, plate tectonics, etc.). Yet he still clings to his beliefs that there is a god and the Bible is His Word. I don’t know what more to say to Jeff. It is as if he uses all of the contradictory evidence, whether science facts, lists of inconsistencies and contradictions in the Bible to show that only men wrote it, logical reasoning, etc etc to strengthen his faith even more. He is quite intelligent. He has no problem with talking to atheists (like me, and some of his friends are atheists with a science background). I see him as a wannabe apologist. He already knows all of the objections, the counterarguments that the other side of the debate will use. I pose questions. He simply ignores them. If your interlocutor does not even attempt to answer the questions, there can be no honest conversation. What now?

Sometimes you just need to be satisfied with planting seeds, some of which may grow later.

Time to shift the subject to the foundations of epistemology instead. Explain why his lack of answers is a dishonest dodge. Explain the principle of objective versus subjective experience. Explain Hitchens’s razor. Explain how extraordinary claims require extraordinary evidence using an analogy like the invisible dragon in your neighbor’s garage. Give him testimony from others regarding wild claims besides religion. Ask him why he doesn’t believe them.

You need to get him out of his psychologically protected zone. He is highly indoctrinated so he won’t adapt or question anything in that god box. So you have to move the conversation to something outside that box and attempt to then circle back to that box (every now and then) if you get any agreement from him on the more general subjects and concepts.

What now? Accept people the way they are, especially if they have the courtesy to do the same for you (even if you are an atheist). What’s important is how people lead a kind, good, helpful life, and not the worldview/religion that justifies that life to themselves.

Kim, you need to recognize that your friend will not respond to rational thought as long as he is a prisoner of his fear and greed, the two great human motivators. His lifetime of religious indoctrination has manipulated these two powerful motivators to the point that he will not change his beliefs until he, himself, can come to grips with his fear and greed. What fear? Fear of non-existence; fear that death is really, truly final. He knows that is where you are leading him. He is in denial of the finality of death and clings to a belief in the invisible, immaterial, immortal human soul that his religious indoctrination has drilled into him. That, along with the carrot that religion offers, namely, everlasting life with attending pleasures, the ultimate manifestation of human greed. Your only hope is to plant seeds like this into his consciousness and hope that he can take it from there and eventually overcome his debilitating fear and greed.

WTF is this doing on your site? http://www.skeptic.com/downloads/top-10-evolution-myths.pdf

That’s just a misleading headline... perhaps to keep us in the practice of reading the full article?

“Skepticism is not cynicism or denial; it is the state of mind that does not agree quickly, that does not accept or take things for granted. A mind that accepts is seeking, not enlightenment or wisdom, but refuge.” J. Krishnamurti https://theendlessfurther.com/tag/krishnamurti/

I see it differently. Skepticism = uncertainty.

We humans don’t seem to like that.

We need to know how things work so that we can have (at least an impression of) control over things.

It takes quite a lot of mental strength and balance to accept uncertainty and move forward. Not everyone has that and in many cases you really can’t do much about it.

Take a person who is looking for more stability = certainty. Teaching him to cross-check sources and verify facts will not work in the direction you expect, because the basic hypothesis that he is seeking the truth is wrong: he is only seeking more stability.

So maybe the first thing to do is to explain to people that seeking the truth is painful. It means trying to break the foundations of your mind all the time. Many times, scientists have had to throw away theories they spent their entire lives building, and many couldn’t.

As an illustration, take the movie “Shutter Island”. During that movie, you build a story in your mind brick by brick, just to have it completely ruined at the end. I found the movie impressive, but it left me with a weird, very unpleasant feeling at the end that I had a hard time explaining.

So progressing towards truth means being trained to tolerate this uncomfortable feeling: the impression of having wasted your time, done harm (convincing others of wrong things), made bad decisions... and still moving forward.

I partly disagree with you. I think that all people by nature do seek truth. We just need to remove obstacles.

And what would show you that you are wrong on this? My experience with students and some policy wonks has shown that no evidence is relevant. In what way is Donald Trump seeking the truth? He knows it — it is what he believes — and any search for evidence is irrelevant. Unfortunately, there are many others like him.

Dfg says:

“I see it differently. Skepticism = uncertainty.

We humans don’t seem to like that.”

Interesting comment, Dfg. I recently organized an art/science exhibition called UNCERTAINTY. The exhibit essay expands on the theme of your thoughts, and is linked at http://stephennowlin.academia.edu.

OK. I taught math in a religious college, finding students only slightly more willing to self-challenge, which increased my motivation to push for truth. My motivation to talk to a true believer evaporates when she seems to choose to ignore good rules of evidence, regurgitate dogma, and abandon curiosity. Challenges to a believer’s interpretation of the universe can be quite negatively emotional for the challenger, let alone the deluded. In standard “emotional rebound” mode, the believer reacts with self-justification. This does not help. Asking the believer what evidence it would take to change beliefs presupposes curiosity, an understanding of evidence in context, clarity about how the mind works, a willingness to believe not in scientists but in the scientific method, and probably a willingness to commit thought crimes against god. Believers want to fit in and be comfortable, and in my experience challenging them to skepticism fails, since they see ahead to a consequential life-change if what they know is wrong, and that ain’t gonna happen none round here. Generally, believers misapprehend the need for truth.

A good place to start is to assume that all persons by nature would prefer to have true beliefs rather than false beliefs. Evolution has equipped us with an innate love of truth. Sure, other things can interfere with or sidetrack this pursuit of truth, but we should always keep in mind the basic assumption.

The simple answer:

Conjectures and Refutations.

Nothing seems as overrated as the belief in and the perceived need for simple causalities.

What would it take for you to change your mind about those “believers”? :)

Craig,

Well said and to the point. I believe that the question of religion is the poster-child for what you are describing, with the term “faith” having no basis of justification and actually being better served by “wish.” Many other “philosophical” beliefs are similar and similarly hard to modify.