During the media blitz over the potential threat now posed by artificial intelligence, I asked OpenAI’s ChatGPT “Is the technological singularity coming?” Here is the response:

ChatGPT: The coming technological singularity is a hypothetical scenario in which artificial intelligence surpasses human intelligence and becomes capable of self-improvement and innovation at an exponential rate. Some experts predict that this could happen by the end of this decade, while others are more skeptical. The implications of such a radical transformation are unknown and potentially unpredictable, posing both opportunities and challenges for humanity. Some possible outcomes include the emergence of a post-human era, the extinction of biological life, or the coexistence of diverse forms of intelligence.

There is no doubt that ChatGPT is an excellent tool, and now OpenAI has produced a next-generation AI called GPT-4 (Generative Pre-trained Transformer 4). GPT-4 is astonishing in the sense that it can eloquently respond to questions, generate digital images from natural language descriptions, and analyze data. According to OpenAI, GPT-4 “exhibits human-level performance on various professional and academic benchmarks.”

In response to that high level of performance, a number of highly intelligent people have suggested that this means we have reached the singularity moment, or at the very least that this level of AI passes the Turing Test of artificial intelligence, achieving human-level natural intelligence. Some, most notably Elon Musk, Steve Wozniak, Andrew Yang, and Yuval Noah Harari, went even further and issued an open letter1 calling on “all labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4… Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable.” The statement even called for the involvement of government and politicians if AI companies do not voluntarily pause. In response, the U.S. House of Representatives Science Committee invited AI companies to respond to questions about the technology and related risks.

This letter is not the first time public figures or researchers have warned us. In 2005, futurist Ray Kurzweil in his book The Singularity is Near predicted it would occur by 2045. Elon Musk recently predicted that machines would overtake us by 2025. Sam Harris was so vexed by the future progress of artificial intelligence that he declared himself to be certain AI would eventually destroy us.2 He opined that AI may not intentionally do so, but rather might eliminate us the way we might annihilate ants during, say, the construction of a building. Google engineer Blake Lemoine famously claimed that the Google chatbot with which he was conversing was sentient.3 (In response, Google fired him, which was probably the wrong thing to do as it only generated conspiracy theories.)

Were those warnings of impending doom not enough, artificial intelligence researcher Eliezer Yudkowsky wrote an opinion editorial for Time magazine in which he argued that the open letter did not go far enough:

Many researchers steeped in these issues, including myself, expect that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die. Not as in “maybe possibly some remote chance,” but as in “that is the obvious thing that would happen.” … Shut it all down.4

One of the research papers cited in the open letter was released by Microsoft.5 I am simply astonished by what GPT-4 can accomplish based on the tests the Microsoft team conducted over a period of six months. Here is a sampling of what is detailed in the paper:

- GPT-4 can generate 2D or 3D images from a detailed text prompt.

- When GPT-4 was asked to produce a short tune, the tune had a melody and a repetitive rhythm (however, GPT-4 did not seem to understand harmony).

- GPT-4 can write software code at a human level from text prompts. “GPT-4 could potentially be hired as a software engineer.” (I have a friend who is a senior-level computer engineer. He has used GPT-4 to write sophisticated code.)

- GPT-4 can create complex 3D video games.

- GPT-4 was able to produce a correct proof from a question asked in the 2022 International Mathematical Olympiad (top high school students from all over the world compete to solve questions in a set period of time—some students are unable to answer some of the questions).

- GPT-4 can solve Fermi questions. These are physics questions where the answer must be estimated because the quantity is difficult or even impossible to measure directly. For example: How many times does the average person’s heart beat in their lifetime?

- GPT-4 can function as a personal assistant. “GPT-4 uses the available APIs [application programming interface] to retrieve information about the user’s calendar, coordinate with other people over email, book the dinner, and message the user with the details.”

- GPT-4 “is able to use the tools with very minimal instruction and no demonstrations, and then make use of the output appropriately [e.g., it knows to use a calculator when needed].” (They continue by stating that it is an emergent capability.)

- GPT-4 can serve as a virtual real world problem solver. “GPT-4 guides the human to find and fix a water leak and recommends the exact actions that the human took (after replacing the seal the leak was gone).”
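The heartbeat question in the list above shows how a Fermi estimate works: a few rough, round-number guesses are multiplied together to get an order-of-magnitude answer. Here is a minimal sketch in which the resting rate of 70 beats per minute and the 80-year lifespan are assumed round figures of my own choosing, not values taken from the Microsoft paper:

```python
# Fermi estimate: heartbeats in an average lifetime.
# Both inputs below are assumed round numbers, not measured values.
BEATS_PER_MINUTE = 70   # assumed typical resting heart rate
LIFESPAN_YEARS = 80     # assumed average lifespan

MINUTES_PER_YEAR = 60 * 24 * 365


def lifetime_heartbeats(bpm: int = BEATS_PER_MINUTE,
                        years: int = LIFESPAN_YEARS) -> int:
    """Multiply the rough guesses together for an order-of-magnitude answer."""
    return bpm * MINUTES_PER_YEAR * years


estimate = lifetime_heartbeats()
print(f"{estimate:.1e} beats")  # on the order of 3 billion
```

The point of such an estimate is the order of magnitude (billions, not millions or trillions), so changing either guess by ten or twenty percent does not alter the conclusion.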

Figure 1. The Sally-Anne false-belief test (adapted from Bubeck et al., 2023).

The Microsoft team attempted to ascertain whether GPT-4 had a theory of mind: the recognition that sentient beings have thoughts, emotions, intentions, etc. It includes not only understanding the state of someone else’s mind but also the task “of reacting on someone’s reaction of someone else’s mental state.” The Sally-Anne false-belief test from psychology (see Figure 1, above) was one of a number of tests given to GPT-4. The researchers concluded:

Our findings suggest that GPT-4 has a very advanced level of theory of mind. While ChatGPT also does well on the basic tests, it seems that GPT-4 has more nuance and is able to reason better about multiple actors, and how various actions might impact their mental states, especially on more realistic scenarios.

How Deep Learning Works

One major goal during the Cold War of the 1960s was to develop software for the intelligence community that could translate Russian into English. Many millions of dollars were spent trying to achieve what appeared to be a reasonable goal. In his book Human Compatible: Artificial Intelligence and the Problem of Control, computer scientist Stuart Russell points out that the early AI bubble burst when the incipient machine translations did not live up to expectations. Not only were computers not powerful enough, but the programming relied on a massive number of hand-written linguistic logic rules. Anyone who has tried to learn another language knows the problems encountered through direct word-for-word translations. Subtle and changing nuances become very important. No simple programming rules work for all the different sentences encountered. “Inflexible robotic rules” are not up to the task.

As computers became capable of storing and processing massive amounts of data and the Internet gave access to extensive sources of information, machine learning came to the rescue. Machine learning is simply a method of scanning the available data to learn. “Learn” is a tricky word and recalls, as an example, how we might cull through a book to understand how calculus uses limits. However, the machine is not learning in the way we do. Take translation as an example. In the early 2000s, statistical machine translation (SMT) was developed where computers analyzed millions of translated words, phrases, and sentences to find statistical patterns on how unknown text should be translated using similar structures. The statistical approach ranked the output translations and chose the best fit. The results were acceptable and sometimes excellent. Nonetheless, the computer had no idea what a word was. It simply used statistics to produce output that best fit the models.

In 2016, Google switched to neural machine translation (NMT) stating: “this change addressed the need for few engineering and design choices while increasing accuracy and speed.”6 NMT is quite different from SMT, because it does not use a system based on phrases mapped to the targeted language. The neural network handles an entire sentence as it moves through the system. The artificial neural network (ANN) loosely resembles a human brain in the sense that there are interconnected nodes, just as a brain has neurons and synapses. (It should be noted that neural networks only resemble brains. We still possess very little idea of how the actual brain works.)

If all this sounds complicated, here’s the bottom line: there are hidden layers in this training process and software engineers cannot be certain what specifically goes on while it happens. It is not surprising that many refer to the process as a black box.

The Contrarian View

In his 2023 book on the threat of AI, Smart Until It’s Dumb: Why Artificial Intelligence Keeps Making Epic Mistakes (And Why the AI Bubble Will Burst), AI engineer Emmanuel Maggiori used an example of a startup company developing a robot that “walks” around a city. Think of the process a human goes through when crossing a street. In a split second we rationally make choices:

- “If there are no cars on the street, cross over.”

- “If the closest car is far away and driving slowly, cross over.”

- “If the pedestrian crosswalk signal is red, wait.”

Translating these “if” statements into the hypothetical robot program for crossing a road might look something like:

If distance to closest car on road < 100 feet, then wait; otherwise, if speed of closest car on road < 20 mph, then cross over; otherwise, wait.
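Written as actual code, the rule above might look like the following minimal sketch. The thresholds (100 feet, 20 mph) come from the example; the function and parameter names are hypothetical and chosen only for illustration.

```python
def should_cross(distance_ft: float, speed_mph: float) -> str:
    """Hand-written crossing rule using the thresholds from the example."""
    if distance_ft < 100:
        return "wait"        # closest car is too near
    elif speed_mph < 20:
        return "cross over"  # closest car is far away and slow
    else:
        return "wait"        # closest car is far away but fast


print(should_cross(distance_ft=250, speed_mph=15))  # cross over
print(should_cross(distance_ft=50, speed_mph=5))    # wait
```

Every number and every decision here was typed in by a person, which is exactly what the machine learning approach described next tries to avoid.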

The inputs are run through a series of “if-otherwise” conditions to reach decisions. What machine learning did to enhance the logic was dispense with thousands of rules by replacing them with a generalized template containing blanks (shown as italicized words below):

If *some input* < *some number*, then *some recommendation*; otherwise, if *some input* < *some number*, then *some recommendation*; otherwise, *some recommendation*.

Table 1. An idealized dataset to fill in the blanks of a hypothesized robot program (after Maggiori).

The copious “if-otherwise” rules dissipate, allowing the computer to fill in the blanks automatically. And where does the computer get the information to fill in the blanks? A dataset (Table 1, above).

In a real-time setting, it would require a massive database with thousands of rows and many columns allowing the computer to fill in the blanks. Maggiori put it this way:

By trying many input/number/recommendation combinations in a systematic way, the computer identifies the most promising ones and fills in the blanks in the template with them. This is called training or learning.
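To make that description concrete, here is a toy sketch of such a training loop. It assumes a tiny, made-up dataset (these rows are hypothetical and are not the values from Table 1) and a short list of candidate thresholds, and it simply tries every way of filling in the blanks of the template, keeping the combination that agrees with the most rows. Real systems search far more cleverly, but the idea is the same.

```python
from itertools import product

# Hypothetical training rows (NOT the values from Table 1):
# (distance to closest car in feet, its speed in mph, correct decision)
DATASET = [
    (30, 10, "wait"),
    (80, 25, "wait"),
    (150, 10, "cross"),
    (200, 15, "cross"),
    (150, 35, "wait"),
    (300, 40, "wait"),
]

INPUTS = {"distance": 0, "speed": 1}   # column index of each input
THRESHOLDS = [20, 50, 100, 250]        # assumed candidate numbers to try
DECISIONS = ["wait", "cross"]


def apply_template(row, rule):
    """Evaluate 'if A < x then r1; otherwise if B < y then r2; otherwise r3'."""
    in1, num1, rec1, in2, num2, rec2, rec3 = rule
    if row[INPUTS[in1]] < num1:
        return rec1
    if row[INPUTS[in2]] < num2:
        return rec2
    return rec3


def train(dataset):
    """Try every way of filling the blanks and keep the most accurate one."""
    best_rule, best_score = None, -1
    for rule in product(INPUTS, THRESHOLDS, DECISIONS,
                        INPUTS, THRESHOLDS, DECISIONS, DECISIONS):
        score = sum(apply_template(row, rule) == row[2] for row in dataset)
        if score > best_score:
            best_rule, best_score = rule, score
    return best_rule, best_score


rule, score = train(DATASET)
print(rule, f"{score}/{len(DATASET)} rows correct")
```

Note that the program never leaves the template: it can only choose among the inputs, numbers, and recommendations it was given.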

In more advanced AI, such as deep learning, the principles remain the same, though the templates may vary a little. For instance, in neural networks, the templates contain rules of the type “If weighted sum of inputs > some value” instead of the “If some input < some value” from the example above. In the more advanced deep learning, which uses neural networks, the template consists of millions of “If weighted sum of inputs > some value” operations. These are organized in a special, problem-specific way in order to help the system learn useful data manipulations such as image filtering or, in the case of GPT-4, word transformation and contextualization.
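A single rule of the “weighted sum of inputs” type can be sketched in a few lines. The weights and threshold below are hypothetical numbers picked for illustration; in a trained network they are the blanks that training fills in, and millions of such units are chained together.

```python
def threshold_unit(inputs, weights, threshold):
    """Return 1 if the weighted sum of inputs exceeds the threshold, else 0."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum > threshold else 0


# Hypothetical values: distance (feet) counts in favor of crossing,
# speed (mph) counts against it.
weights = [0.02, -0.1]
threshold = 1.0

# 200 * 0.02 - 15 * 0.1 = 2.5, which exceeds 1.0, so the unit outputs 1.
print(threshold_unit([200, 15], weights, threshold))
# 50 * 0.02 - 30 * 0.1 = -2.0, which does not, so the unit outputs 0.
print(threshold_unit([50, 30], weights, threshold))
```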


Although the programs can be quite complex in the ways in which they are constructed and interact, the clear takeaway, according to Maggiori, is that the computer has no freedom outside of the templates/programs. The principle behind machine learning is exceedingly simple.

Then there is reinforcement learning, a variant of machine learning in which the computer generates its own dataset, as in Table 1, by experimenting with random decisions and statistically analyzing the results. For example, Google began its autonomous vehicle work in 2009, generating massive databases as its cars (with human drivers to assure safety) clocked thousands of hours to “learn” the rules of the road. Maggiori emphasizes that these programs are still governed by guardrails; that is, they are “governed by human assumptions.”

Claims by many AI researchers that machines “teach themselves” are grossly exaggerated. The machine’s ability to learn is limited to the parameters and data available through human input. Take, for example, what AlphaZero (a computer program developed by artificial intelligence company DeepMind to master the games of chess, Shogi, and Go) did when learning to play the game of Go. The dataset was generated automatically by simply having the computer play itself in thousands of games. However, the definition of who won and the parameters of the board were constructed by humans.
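The division of labor can be seen in a toy sketch. It is not a full reinforcement learning algorithm, only an illustration of the description above: the program generates its own dataset by making random decisions, then analyzes the results statistically. The scoring rule, the list of allowed actions, and the two traffic situations are all hypothetical and, crucially, all written by a human.

```python
import random
from collections import defaultdict

ACTIONS = ["wait", "cross"]  # the only actions the program is allowed to try


def reward(distance_ft, action):
    """Human-written scoring rule (a hypothetical stand-in for 'who won')."""
    if action == "cross":
        return 1 if distance_ft >= 100 else -10  # crossing near a car is penalized
    return 0                                      # waiting is safe but gains nothing


def generate_dataset(trials, rng):
    """Experiment with random decisions and log what happened."""
    rows = []
    for _ in range(trials):
        distance = rng.choice([50, 200])          # simplified: car is near or far
        action = rng.choice(ACTIONS)
        rows.append((distance, action, reward(distance, action)))
    return rows


def best_actions(rows):
    """Average the logged rewards and pick the best action per situation."""
    totals = defaultdict(lambda: [0, 0])          # (distance, action) -> [sum, count]
    for distance, action, r in rows:
        totals[(distance, action)][0] += r
        totals[(distance, action)][1] += 1
    policy = {}
    for distance in {d for d, _ in totals}:
        policy[distance] = max(
            (a for a in ACTIONS if (distance, a) in totals),
            key=lambda a: totals[(distance, a)][0] / totals[(distance, a)][1],
        )
    return policy


rows = generate_dataset(trials=1000, rng=random.Random(0))
print(best_actions(rows))
```

The program “discovers” that it should wait when the car is near and cross when it is far, but only because a person defined what counts as a good outcome and which actions exist at all.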

I don’t want to underestimate the accomplishments of convolutional neural networks (CNN) or AI in general. Sometimes even when operating under general limitations by humans, the machines can come up with useful rules that humans have missed. The AlphaZero CNN determined valuable moves that were good enough to beat the best players in the world. The aforementioned Stuart Russell suggested that a computer might be able to disable its “off-switch.”

Suppose a machine has the objective of fetching the coffee. If it is sufficiently intelligent, it will certainly understand that it will fail in its objective if it is switched off before completing its mission. Thus, the objective of fetching coffee creates, as a necessary subgoal, the objective of disabling the off switch.

However, as Maggiori pointed out, “why would anyone include the action ‘disable the off-switch’ as part of the available actions to try out for coffee delivery?” It is simply beyond the pale of machine learning: “Even if the action was allowed, the stars would have to align for the computer to ever try out that action and measure a significant positive impact in the efficiency of coffee delivery.”

What about deep learning with all of those hidden layers and the lack of specific information about how the neural network processes the data? Although we don’t know the details of the specific combination of filters, according to Maggiori we do know the neural network has limitations. Here is what he had to say about the filtering process in the neural network:

The training process starts with a completely random set of filters, so the initial model is generally useless. Afterward, it starts altering the filters progressively to find promising improvements. This is akin to an appointment with the eye doctor who tries out several glasses’ prescriptions, changing them little by little until finding the one you’re most comfortable with. But the process is much lengthier and more chaotic.
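The eye-doctor analogy can be sketched as a trial-and-error loop. This is a deliberately simplified stand-in: real networks adjust millions of values using gradient-based methods, whereas the sketch below tunes a single number by random hill climbing (try a small change, keep it only if the error drops). The data, step size, and function names are assumptions made for illustration.

```python
import random

# Hypothetical data: inputs x with targets y = 3 * x, so the ideal setting is 3.0.
DATA = [(x, 3.0 * x) for x in range(1, 6)]


def error(weight):
    """Mean squared error of the one-number model y = weight * x."""
    return sum((weight * x - y) ** 2 for x, y in DATA) / len(DATA)


def train(steps=2000, step_size=0.1, seed=0):
    rng = random.Random(seed)
    weight = rng.uniform(-10, 10)   # start from a random, generally useless setting
    for _ in range(steps):
        candidate = weight + rng.uniform(-step_size, step_size)  # small tweak
        if error(candidate) < error(weight):                     # keep only improvements
            weight = candidate
    return weight


weight = train()
print(round(weight, 2))
```

Nothing in the loop knows why 3.0 is the right answer; it only knows that some tweaks reduce the error and others do not.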

Unfortunately, because AI researchers cannot explain all the specific ways the hidden layers operate on the data, there is no guarantee what the network will spit out. Hence the silly mistakes journalists enjoy finding in systems like ChatGPT and GPT-4. When Maggiori asked GPT-3 “Who was the president of the UK last year?” the response was “The president of the UK was not elected last year.” As Maggiori wisely emphasizes, making changes for each mistake only seems to create additional mistakes. This is not so serious for word translations but can be tragic with autonomous vehicles.

You have probably heard of the deaths related to autonomous driving that reveal how easily AI can be fooled. In several cases, road signs have been slightly changed and the autonomous vehicles fail to recognize what is meant. Many of us have seen the effect graffiti has on a road sign. Most of the time, humans can still recognize the sign, but autonomous vehicles may not. Maggiori concludes that these failures ultimately occur because CNNs do not have a sophisticated “model of the world as we know it,” which probably explains why autonomous vehicles perform well in controlled environments but falter in the real world. Even Elon Musk has realized the difficulties in autonomous driving: “Generalized self-driving is a hard problem, as it requires solving a large part of the real-world AI. I didn’t expect it to be so hard, but the difficulty is obvious in retrospect.”

The underlying simplicity of AI coding makes me think we are a long way from reaching a singularity, especially after more than a decade of failures in attempting to get self-driving cars. At this stage any computer takeover appears to be science fiction, not applied science. More importantly, there are serious ramifications that would result from any government-mandated pause in AI development.

Why a Pause in Artificial Intelligence Could Be a Very Bad Idea

Last year, Interesting Engineering reported7 that Ni Yongjie, deputy director of the Shanghai Institute of Taiwan Studies, stated: “PLA [the People’s Liberation Army of the People’s Republic of China] should conduct blockade exercises around the island and use AI technology to deter U.S. interference and Taiwanese independence forces.” He went on to say that the PLA should become a global leader in intelligent warfare by using “AI, cloud computing, big data, cyberattacks and defense.” The PLA has been simulating the invasion of Taiwan through AI war games for some time. The AI results suggest that the PLA would be unable to successfully invade Taiwan through 2026, but, ominously, the CIA reports that Chinese President Xi Jinping has ordered the PLA to be ready for an invasion by 2027.8

According to Gregory Allen, the director of the AI Governance Project at the Center for Strategic and International Studies: “China is not going to slow down its AI development in either the commercial or military domain.”9 Allen’s sentiments have been echoed by many think tanks, academics, and government intelligence personnel based on the massive investments China is making in AI and Chinese hints at an unwillingness to pause AI development.

Game theory is the study of mathematical models of strategic interactions among actors assumed to be rational. In a recent paper entitled Nuclear Deterrence in the Algorithmic Age: Game Theory Revisited,10 Roy Lindelauf (a game theorist and professor working at the Ministry of Defense of the Netherlands) reminds us that “game theory models prescribe what a decision maker ought to do in a given situation…and to alleviate the burden of human cognitive biases.”

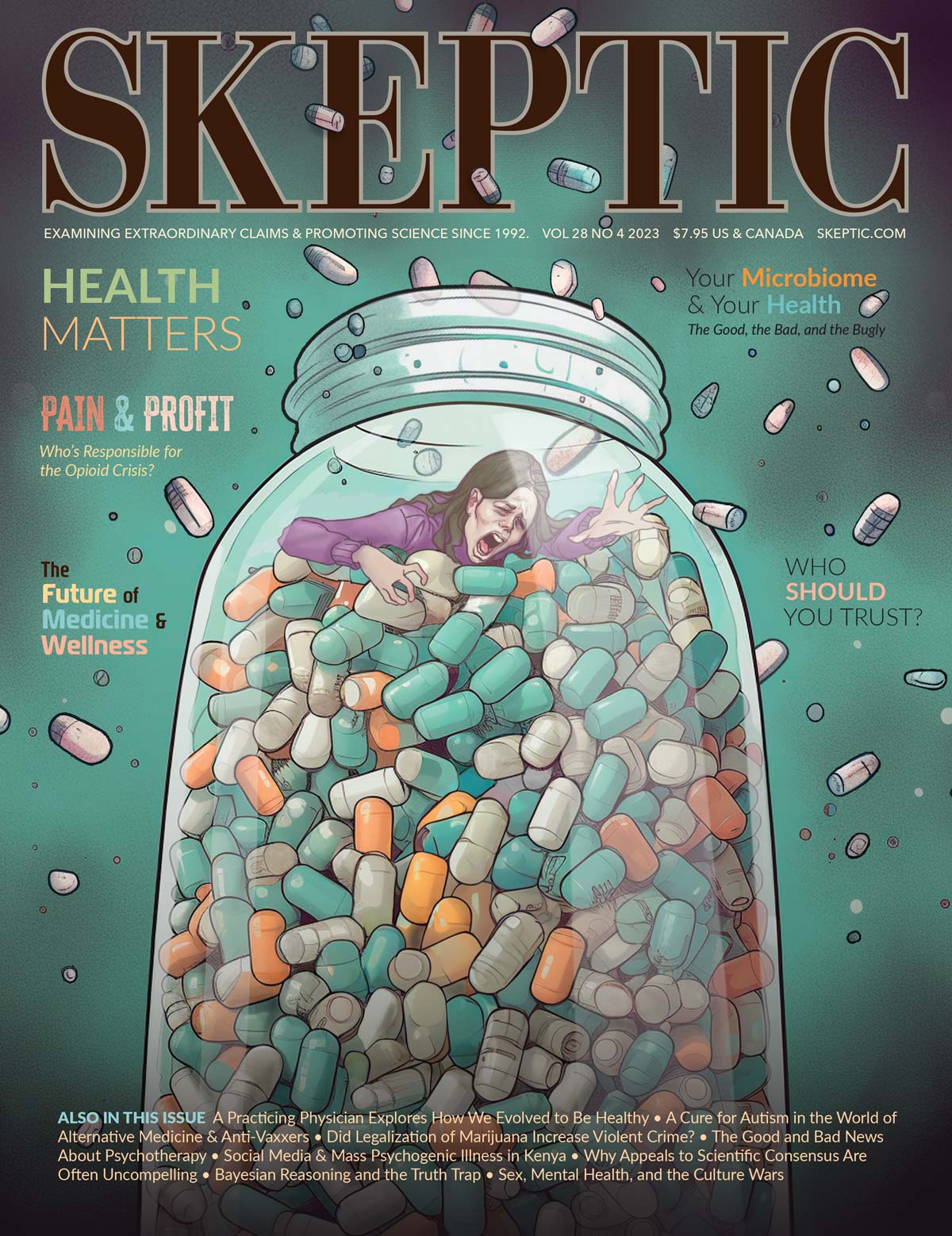

This article appeared in Skeptic magazine 29.1


Credibility is the ultimate key in deterrence theory. Is a belligerent dissuaded from aggressive actions by the opponent’s threats? Ultimately, the best solution regarding the threat of an invasion of Taiwan is a Nash equilibrium (named after mathematician John Nash, the subject of the book and movie A Beautiful Mind), in which no party can benefit by unilaterally changing its strategy. In the case of the development of AI, such an equilibrium holds as long as both sides are deterred from causing mutual destruction. In other words, no one has anything to gain by changing the status quo. If China comes to believe it has superior AI, however, that belief may alter any existing Nash equilibrium, and the equilibrium could dissolve if we pause AI research. In fact, it appears to me that there is a much bigger threat from the PRC than from any imminent takeover of humans by AI.
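A toy two-player game shows what a Nash equilibrium is and how a shift in perceived payoffs can dissolve it. The payoff numbers below are entirely made up for illustration and do not come from Lindelauf’s paper or from any intelligence assessment; the sketch simply checks every pair of strategies to see whether either player could do better by changing course alone.

```python
from itertools import product

STRATEGIES = ["maintain status quo", "escalate"]

# Hypothetical payoffs as (row player, column player); higher is better.
# Chosen only to illustrate deterrence: escalating against a credible
# opponent is costly for both sides.
PAYOFFS = {
    ("maintain status quo", "maintain status quo"): (0, 0),
    ("maintain status quo", "escalate"): (-5, -2),
    ("escalate", "maintain status quo"): (-2, -5),
    ("escalate", "escalate"): (-10, -10),
}


def pure_nash_equilibria(payoffs):
    """Return strategy pairs where neither player gains by deviating alone."""
    equilibria = []
    for row, col in product(STRATEGIES, repeat=2):
        row_payoff, col_payoff = payoffs[(row, col)]
        row_can_improve = any(payoffs[(alt, col)][0] > row_payoff for alt in STRATEGIES)
        col_can_improve = any(payoffs[(row, alt)][1] > col_payoff for alt in STRATEGIES)
        if not row_can_improve and not col_can_improve:
            equilibria.append((row, col))
    return equilibria


print(pure_nash_equilibria(PAYOFFS))

# If one side comes to believe its AI makes escalation pay off (again, a
# made-up number), the status quo stops being an equilibrium.
shifted = dict(PAYOFFS)
shifted[("escalate", "maintain status quo")] = (3, -5)
print(pure_nash_equilibria(shifted))
```

With the first set of payoffs, the only equilibrium is for both sides to maintain the status quo. Changing a single number, one side’s belief about what escalation would gain it, moves the equilibrium to escalation by that side.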

In 1950, Alan Turing proposed a test to determine whether a machine exhibits intelligent behavior at or beyond human level. An evaluator questions a human and a machine simultaneously without knowing which is which. If the evaluator cannot determine the difference between the human and the machine, the machine has passed his test. When it comes to pausing AI research, we should at least be dealing with machines that pass the Turing test. No artificial intelligence has ever passed it.

About the Author

Marc J. Defant is a professor of geology at the University of South Florida specializing in the study of volcanoes, more specifically the geochemistry of volcanic rocks. He has been funded by the NSF, National Geographic, the American Chemical Society, and the National Academy of Sciences and has published in many international journals including Nature. His book Voyage of Discovery: From the Big Bang to the Ice Age is in its 2nd edition.

References

This article was published on May 24, 2024.