In this week’s eSkeptic:

NEW FROM DANIEL LOXTON ON SKEPTICBLOG.ORG

Thoughts About LogiCON 2011

On April 9, 2011, Daniel Loxton presented the keynote speech at LogiCON 2011 at the Telus World of Science in Edmonton, Alberta, Canada. Loxton shared his personal experiences as a paranormal believer and as a skeptical investigator later in life. This post is the follow-up summary of the event.

ABOVE: Daniel Loxton Keynote Address at LogiCON 2011. Photo by Mark Iocchelli

Debate: Is Religion the Problem?

Dinesh D’Souza versus Michael Shermer

Tuesday April 19, 2011 at 7pm

Free and open to the public

University of Florida (Auditorium)

(Union Road & Newell Drive)

Is belief in God a menace to civilization, as the new atheists contend? Would a secular world be a more rational, peaceful, and decent world?

The rise of Islamic radicalism has caused many people to blame Islam in particular, and religion in general, for the rise of modern terrorism. Historically, religion, and specifically Christianity, seems to have produced persecution and bloodshed. But is this a fair characterization?

Solution to last week’s photo

The solution to the Mystery Photo from April 6th is “Claude,” the magnificent albino beast (a miracle of evolutionary forces and random genetic mixing). He can be found at the California Academy of Sciences in San Francisco.

We will reveal the answer to this week’s Mystery Photo in next week’s eSkeptic.

This week’s photo

From what year (or decade if you’re not sure) is this HP programmable calculator?

Hint: those yellow sticks are called pencils.

About this week’s feature article

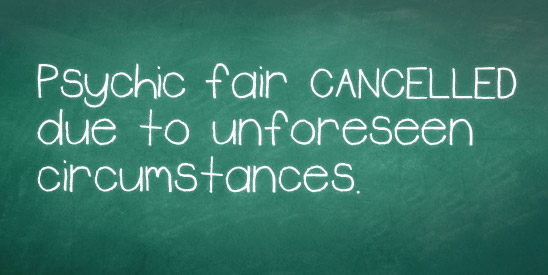

In a soon-to-be-published controversial paper entitled “Feeling the Future: Experimental Evidence for Anomalous Retroactive Influences on Cognition and Affect,” Daryl Bem claims to have found significant statistical data in support of precognition in various situations through a series of nine experiments. Nicolas Gauvrit presents several analyses critiquing the methodology and statistical data presented in Bem’s study.

SUBSCRIBE to Skeptic magazine for more great articles like this one.

Precognition or Pathological Science? An Analysis of Daryl Bem’s Controversial “Feeling the Future” Paper

by Nicolas Gauvrit

Renowned experimental psychologist Daryl Bem is about to publish an astonishing article in the prestigious Journal of Personality and Social Psychology (an online version is available now) entitled “Feeling the Future: Experimental Evidence for Anomalous Retroactive Influences on Cognition and Affect.”1 In this paper, Bem claims to prove precognition in various situations through a series of nine experiments. Well before its publication, this article is already being discussed, criticized, or acclaimed in the media (e.g., New York Times, January 10, 2011), and was even featured on The Colbert Report.

In most of these experiments, subjects were seated in front of a computer. The screen first showed a picture of two curtains side by side. Subjects were then asked to guess behind which curtain a picture was “hidden”. The target pictures to be found were either positive (e.g., smiling people), negative (e.g., a car accident), neutral (e.g., a building), or “erotic” (e.g., a man and woman having sexual intercourse). In fact, no picture was hidden: the computer used a random method to determine, only after subjects had selected one side of the screen, on which side to display the picture. Bem claims that the subjects knew in advance which side would be randomly chosen; because the random choice happened after they made their selection, he presents this as an example of precognition, or backward causality.

The basic idea behind Bem’s experiments is to produce reversed forms of classical psychological effects. For instance, a subliminal (or non-subliminal) presentation of a stimulus is known to facilitate the recognition of a subsequent related stimulus, an effect called “priming”: subjects are told to indicate as quickly as possible whether a series of letters (called a “target”) displayed on a screen is an English word or not. For instance, TABLE may appear, and subjects would say it is a word. Unbeknownst to the subjects, a prior subliminal presentation of a word, either “CHAIR” or “APPLE”, is briefly displayed on screen. The response time (delay in responding) is significantly shorter when subjects are exposed to “CHAIR”—an item related to the target—than when they are exposed to “APPLE”.

In experiments 3 and 4, Bem conducted a retroactive version of an affective priming experiment: a target picture is first displayed, for which subjects have to say if the picture is “pleasant” or “unpleasant”. A random priming word (either pleasant or unpleasant) appears afterwards on the screen.

Overall, four psychological effects (priming, facilitation of recall, habituation, approach-avoidance) are reversed in time in Bem’s nine experiments. Except for retroactive induction of boredom (experiment 7), Bem claims that he found statistically significant data in support of precognition. However, criticisms of Bem’s paper have already been made on both methodological and statistical grounds.

James Alcock’s Methodological Critique

In an article in the March/April 2011 issue of Skeptical Inquirer, James Alcock highlights the extreme fuzziness of Bem’s methodology.2 Bem describes in detail a random generator based on physical processes, known to be more reliable than any pseudo-random software. He is indeed being cautious using such a generator. However, for some unexplained reason, not all his experiments are carried out using it.

Experiment 1 (detection of erotic stimuli) begins with each subject viewing a series of 12 erotic, 12 positive, and 12 negative pictures…but only for the first 40 participants. The last 60 subjects were exposed to 18 erotic pictures and 18 non-erotic but positive pictures. These are two of a long series of methodological irregularities that Alcock identifies, and such unconventional techniques are puzzling. For example, Bem needs to know for classification purposes if pictures shown on the screen are positive or negative, and emotionally strong or not. The best way to achieve this goal is to select images from a reliable database, such as the International Affective Picture System.3 This Bem did…for “most of the pictures”. We do not have any indication of the proportion of pictures not taken from the databank, nor do we have details of the procedure used to classify these images as being “positive”, “neutral”, or “negative”.

A question naturally arises from this odd methodology: do we even know for sure that the classification was made before the experiment? If that is not the case, then of course the data would be ad hoc. As well, Bem claims that his prior hypothesis in experiment 1 (where subjects have to find on which side of the screen a picture will appear) was that people would find the picture in more than 50% of the cases for erotic pictures. If this was really his hypothesis, why would he need to classify non-erotic pictures as positive, negative, neutral, or non-erotic positive? The methodology strongly suggests that what is presented as an a priori hypothesis is in fact an a posteriori hypothesis, based on the results of the experiment.

This is not acceptable in research using statistics to prove a general hypothesis, for a reason linked with “risk”. Each statistical test (i.e., a method that may allow us to “prove” a general hypothesis) carries a risk, usually set at 0.05, or 5%. This 5% risk means that (in Bem’s case) if there is no such thing as precognition, then the result of a statistical test will nevertheless lead us to believe the contrary one time in 20. Since we can imagine at least hundreds of possible tests, choosing the test afterward may induce misleading results…
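To make the “one time in 20” risk concrete, here is a minimal sketch of how that risk accumulates when several tests are available. It assumes the tests are statistically independent, which is a simplification that real tests on the same data will not satisfy exactly; the function name `familywise_error_rate` is ours, not the article’s.

```python
def familywise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive among `num_tests` tests.

    Assumes (for illustration only) that the tests are independent and
    that the null hypothesis is true in every one of them.
    """
    if num_tests < 0:
        raise ValueError("num_tests must be non-negative")
    # Probability that every single test correctly stays non-significant
    all_quiet = (1.0 - alpha) ** num_tests
    return 1.0 - all_quiet


if __name__ == "__main__":
    for k in (1, 10, 20, 100):
        print(f"{k:>3} tests: {familywise_error_rate(k):.1%}")
```

Under these assumptions a single test misleads 5% of the time, while a researcher free to pick among many tests after the fact will find at least one “significant” result far more often.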

Wagenmakers et al.’s Critique

This is, more or less, what Eric-Jan Wagenmakers and his colleagues claim in their paper that appears in the same journal as Bem’s article.4 Wagenmakers et al. argue that what is presented as a confirmatory analysis of some previous psi hypothesis is in fact an exploratory analysis. This distinction may seem trivial at first glance, but the statistics involved in Bem’s paper are fundamentally confirmatory, and cannot be used for exploration.

Indeed, in an exploratory analysis, one does not know exactly which hypothesis to test. For instance, Bem may have had a feeling that, in some circumstances, people will show precognition. If he does not know before the experiment whether this will be for erotic, negative, or positive pictures, then he may run a series of tests and credit whichever situation turns out to be the “good” one for psi phenomena to show up. But if he does so (and he did), then, since he uses several tests, the above argument shows that the results obtained cannot be taken as proving precognition. The usual way to deal with such a situation is to consider any result coming out of this kind of study as “exploratory”, i.e., preliminary. The right conclusion would then not be “there is precognition with erotic pictures,” but rather “if there is precognition, it is more likely to appear with erotic pictures. Now, one has to build up an experiment to test precognition with erotic pictures….”

Wagenmakers et al. go even further, arguing that when psi or any other improbable effect is involved, one should take into account the multitude of failed attempts to prove it. This cannot be achieved through classical statistical testing (except through meta-analysis). For that reason, Wagenmakers et al. advocate the use of a method built on an entirely different basis. Their arguments are certainly well-advised. But such a change of paradigm is not necessary to deny the link that Bem sees between his data and conclusions. Actually, as we will see, a classical statistical approach suffices to show that the very data exhibited by Bem do not support the final claim of precognition.

Multiple Testing

Classical statistical testing is based on a simple idea. It aims at refuting a so-called “null hypothesis.” In Bem’s case, the null hypothesis is that there is no such thing as precognition, something Bem wishes to prove false. As in any scientific experiment, we begin by assuming that the null hypothesis is true, and reject it only if the data show statistically significant differences between conditions and we can safely rule out chance as an explanation. That is, the probability of observing a result at least as extreme as the experimental one if the null hypothesis were true (the so-called “p-value”) must be less than 5%. This means that even if the null hypothesis is true and the effect is nonexistent, there is still a 5% probability that a “significant” result will be observed anyway, by chance. This classical testing method is therefore bound to fail (if, again, the null hypothesis is actually true) about once in every 20 attempts. For this reason, statistical handbooks for researchers recommend (1) using only one test for each hypothesis and (2) choosing the test before the experiment begins. This is not what Bem did. For instance, he cites using as many as 10 tests for testing precognition in experiment 1, and 8 in experiment 2.

Correction procedures for multiple testing do exist. Social scientists are normally taught to use such procedures whenever they have to use several tests for the same hypothesis, a situation that should rarely occur. One way of achieving multiple-testing correction is to divide the maximum admissible risk of each test by the number of tests being considered. For instance, considering experiment 1 from Bem’s article, this would imply concluding that there is proof of precognition only if at least one test (but possibly all of them) shows a p-value of less than 0.5% (5%/10 = 0.5%). No test meets that criterion in Bem’s first experiment.
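The division rule described above (commonly known as the Bonferroni correction) can be sketched in a few lines. The function names are ours; the only figures used are ones stated in this article: 10 tests in experiment 1, and a best reported p-value of 1% for that experiment as listed in Table 1.

```python
def bonferroni_threshold(alpha_percent: float, num_tests: int) -> float:
    """Maximum admissible p-value (in %) for each test after correction."""
    if num_tests < 1:
        raise ValueError("num_tests must be at least 1")
    return alpha_percent / num_tests


def survives_correction(best_p_percent: float, num_tests: int,
                        alpha_percent: float = 5.0) -> bool:
    """True if the best observed p-value is below the corrected threshold."""
    return best_p_percent < bonferroni_threshold(alpha_percent, num_tests)


if __name__ == "__main__":
    # Experiment 1: 10 tests acknowledged, best p-value 1% (from Table 1)
    print(bonferroni_threshold(5.0, 10))   # corrected threshold in %
    print(survives_correction(1.0, 10))    # does the best result pass?
```

Dividing the risk by the number of tests is deliberately conservative: it keeps the overall chance of a false “proof” at or below the original 5%, whatever the dependence between tests.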

However severe this might seem, it is still not enough. The correct number to use is not the number of tests actually mentioned in the article, but the number of tests from which the author would have derived the conclusion. In the case of Bem’s first experiment, this is far more than the 10 tests acknowledged by the psychologist. First of all, every test in the paper is made unilaterally (see below). In experiment 5, for example, Bem conducts a retroactive habituation task: subjects are asked to indicate which of two pictures they prefer, and are afterward exposed to a random picture chosen from the two prior stimuli (for instance, a car accident and a broken leg). In a traditional habituation task, the target is first presented several times, which leads either to an increased preference for that same target (mere exposure effect) or to a decreased preference (boredom effect), depending on many factors including the number of repetitions. Here, Bem says that he is testing for a retrograde mere exposure effect.

Doing a unilateral (one-sided) test means that had the subjects chosen the target with a probability significantly less than 50%, Bem would not have concluded that this was proof of precognition. But such a result would, of course, be an example either of negative psi or of a boredom effect, and thus a proof of precognition in both cases. We therefore have good reasons to believe that such a result would actually have led the psychologist to conclude that he had proof of precognition via a retrograde boredom effect, or negative psi. Since Bem did not have a good reason to believe that a probability significantly less than 50% would not be a proof of precognition, we must think that he actually chose the “right” test after the experiment had been finished. Conducting a unilateral test chosen a posteriori is equivalent to doing two unilateral tests: one to check if the observed percentage is significantly more than 50%, one to check if it is significantly less.
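The doubling can be checked exactly for a simple binomial guessing task. The sketch below is an illustration under stated assumptions, not a reconstruction of Bem’s analysis: the sample size `n = 100` is hypothetical, and subjects are assumed to guess at exactly 50% under the null hypothesis.

```python
from math import comb


def upper_tail(n: int, k: int) -> float:
    """P(X >= k) for X ~ Binomial(n, 1/2)."""
    return sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n


def lower_tail(n: int, k: int) -> float:
    """P(X <= k) for X ~ Binomial(n, 1/2)."""
    return sum(comb(n, i) for i in range(0, k + 1)) / 2 ** n


def one_sided_critical_count(n: int, alpha: float = 0.05) -> int:
    """Smallest k such that P(X >= k) <= alpha."""
    for k in range(n + 1):
        if upper_tail(n, k) <= alpha:
            return k
    raise ValueError("no critical count exists for this n and alpha")


n = 100                               # hypothetical number of trials
k = one_sided_critical_count(n)       # hits needed for "significantly above 50%"

# Direction fixed in advance: only the upper tail counts as a success.
planned = upper_tail(n, k)

# Direction picked after seeing the data: either tail would be reported.
post_hoc = upper_tail(n, k) + lower_tail(n, n - k)

if __name__ == "__main__":
    print(f"critical count: {k} of {n}")
    print(f"false-positive risk, direction planned:  {planned:.4f}")
    print(f"false-positive risk, direction post hoc: {post_hoc:.4f}")
```

Because the binomial distribution is symmetric at 50%, the two tails carry the same probability, so leaving the direction open after the fact doubles the real risk while the reported risk stays at the one-sided value.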

In experiment 1, Bem tests for negative, neutral, positive, romantic but non-erotic, and erotic pictures. This would make 5 tests. But Bem did 2 tests in each case (a so-called t-test, and a binomial test), leading to 10 tests.

Table 1 shows, for each experiment, the number of tests involved, the maximum admissible p-value after correction for multiple testing (based on the number of tests Bem would probably have accepted, itself derived from the number of tests actually mentioned), and the best p-value reported, i.e., the smallest risk at which at least one of the tests mentioned leads to a “proof of precognition.”

| Experiment Number | Number of tests mentioned | Max. p-value (%) | Best p-value observed (%) |
|---|---|---|---|
| 1 | 20 | .25 | 1 |
| 2 | 48 (2 groups, 3 types of pictures, 4 tests) | .10 | .9 |
| 3 | 16 (2 types of pictures, 4 tests) | .31 | .7 |
| 4 | 8 (2 cutoffs, 2 transformations) | .62 | 1.4 |
| 5 | 24 (2 genders, 3 types of pictures, 2 tests) | .21 | 1.4 |
| 6 | 32 (2 genders, 4 types of pictures, 2 tests) | .16 | 3.7 |
| 7 | 16 (4 tests, 2 types of pictures) | .31 | 9.6 |
| 8 | 6 (3 groups) | .82 | 2.9 |
| 9 | 6 (3 groups) | .82 | .2* |
| ALL | 176 | .03 | .2 |

Table 1. For each experiment, the number of tests mentioned in Bem’s article is multiplied by a factor of 2 to correct for unilateral testing. The table reports that doubled count, the corresponding corrected maximum p-value (the risk one should accept), and the best p-value given by Bem. Note that the corrected risk calculated here may well be over-estimated. The last line shows the same analysis considering every test in Bem’s article as an attempt to prove precognition.

Bem claims to have produced 8 proofs of precognition through 9 experiments (he only recognizes a lack of conclusive results for experiment 7). After a correction procedure for multiple-testing, only 1 of the nine experiments still shows what looks like a significant result.

Is there, then, one proof of precognition? This is far from sure. Before coming to such a conclusion, one must consider (as Bem himself admits) that experiments 8 and 9 test the same hypothesis of retroactive facilitation of recall. Should we not, then, stop treating them separately and look at experiments 8 and 9 together?

Even more, shouldn’t we consider that all nine experiments together test the same precognition-hypothesis? Doing so, we face 176 tests attempting to prove precognition, as displayed in the last line of Table 1. The maximum admissible p-value is then .03%, far less than the best value Bem reports (.2%). From this we may reasonably conclude that there is no statistical proof of precognition in Bem’s study!
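The arithmetic behind that comparison, using the same division rule as before and the figures from the last line of Table 1 (the symbols are our own shorthand):

$$
\alpha_{\text{corrected}} = \frac{5\%}{176} \approx 0.028\% \approx 0.03\%,
\qquad
p_{\text{best}} = 0.2\% > \alpha_{\text{corrected}}
$$

The best p-value in the whole paper is roughly seven times larger than the corrected threshold, so it falls well short of significance once all 176 tests are counted.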

Wagenmakers et al. wisely advise distinguishing between exploratory studies and confirmatory studies. A work such as Bem’s shows what happens when that advice is not followed. Being generous, we may say that Bem’s research is an investigation of what might be true in various precognition conditions. Since only one situation leads to suggestive results, it is now time to test it, following the golden rule of statisticians: “One hypothesis, one test, chosen prior to the experiment.”

We must admit that the statistical flaws in Bem’s article often show up in social science. Psychologists often publish many tests around the same hypothesis. There is, however, a big difference between psychological science and parapsychology. When a psychologist claims to have proof of an unexpected, strange phenomenon, many other psychologists attempt to replicate the experiment. So do parapsychologists. But when psychologists fail to reproduce a conclusion, they abandon their previous hypothesis (such as the “Mozart effect”), whereas parapsychologists show a tendency to explain the failure by factors other than their own earlier error. They may, for instance, invoke the presence of a skeptic’s mind around the laboratory (James Randi has been so accused). Social scientists and parapsychologists share some bad statistical habits, but social scientists have efficient correction methods that parapsychologists do not.

References

1. Bem, D. 2011 (in press). “Feeling the Future: Experimental Evidence for Anomalous Retroactive Influences on Cognition and Affect.” Journal of Personality and Social Psychology.

2. Alcock, J. 2011. “Back from the Future: Parapsychology and the Bem Affair.” Skeptical Inquirer, March/April.

3. Lang, P. J., and Greenwald, M. K. 1993. International Affective Picture System Standardization Procedure and Results for Affective Judgments. Gainesville, FL: University of Florida Center for Research in Psychophysiology.

4. Wagenmakers, E. J., Wetzels, R., Borsboom, D., and van der Maas, H. (in press). “Why Psychologists Must Change the Way They Analyze Their Data: The Case of Psi.” Journal of Personality and Social Psychology.

Skeptical perspectives on psychics, ESP and magic…

Flim Flam! Psychics, ESP, Unicorns and other Delusions

by James Randi

Guidelines for Testing Psychic Claimants

by Richard Wiseman and Robert L. Morris

Palm readers, astrologers and those who claim they can talk to the dead make the rounds of national talk shows. Even police departments enlist the services of “psychic” detectives. But what proof do we have that any of these claims are real? Richard Wiseman and Robert Morris provide helpful and professional guidelines to help health professionals, law enforcement agencies, cult investigators, scientists, and the public at large assess those who make psychic claims. READ more and order the book.

Be sure to check out Shop Skeptic’s section of lectures on the topics of psychics, ESP and magic.